Finding Distinct Values Quickly

Background

Back in 2014, I wrote an article called Performance Tuning the Whole Query Plan. It looked at ways to find a relatively small number of distinct values from a moderately large dataset, and concluded that a recursive solution could be optimal. This follow-up post revisits the question for SQL Server 2019, using a larger number of rows.

Test Environment

I will be using the 50GB Stack Overflow 2013 database, but any large table with a low number of distinct values would do.

I will be looking for distinct values in the BountyAmount column of the dbo.Votes table, presented in bounty amount order ascending. The Votes table has just under 53 million rows (52,928,720 to be exact). There are just 19 different bounty amounts, including NULL.

The Stack Overflow 2013 database comes without nonclustered indexes to minimize download time. There is a clustered primary key index on the Id column of the dbo.Votes table. It comes set to SQL Server 2008 compatibility (level 100), but we will start with a more modern setting of SQL Server 2017 (level 140):

ALTER DATABASE StackOverflow2013

SET COMPATIBILITY_LEVEL = 140;The tests were performed on my laptop using SQL Server 2019 CU 2. This machine has four i7 CPUs (hyperthreaded to 8) with a base speed of 2.4GHz. It has 32GB RAM, with 24GB available to the SQL Server 2019 instance. The cost threshold for parallelism is set to 50.

Each test result represents the best of ten runs, with all required data and index pages in memory.

1. Row Store Clustered Index

To set a baseline, the first run is a serial query without any new indexing (and remember this is with the database set to compatibility level 140):

SELECT DISTINCT

V.BountyAmount

FROM dbo.Votes AS V

ORDER BY

V.BountyAmount

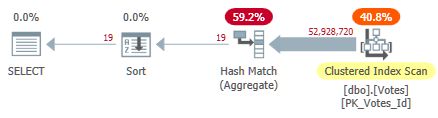

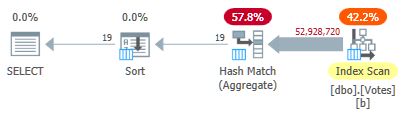

OPTION (MAXDOP 1);This scans the clustered index and uses a row-mode hash aggregate to find the distinct values of BountyAmount:

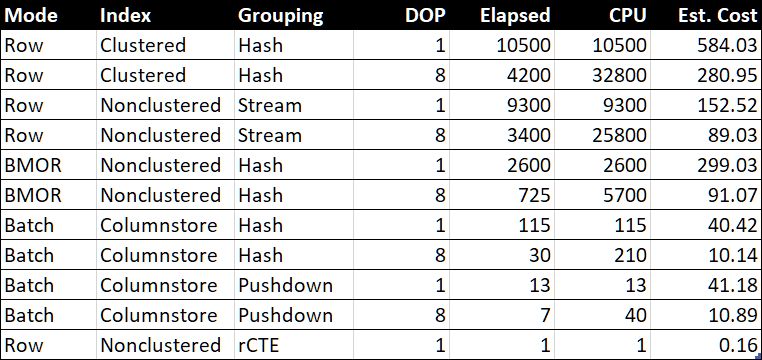

This takes 10,500ms to complete, using the same amount of CPU time. Remember this is the best time over ten runs with all the data in memory. Automatically-created sampled statistics on the BountyAmount column were created on the first run.

About half of the elapsed time is spent on the Clustered Index Scan, and about half on the Hash Match Aggregate. The Sort only has 19 rows to process, so it consumes only 1ms or so. All the operators in this plan use row mode execution.

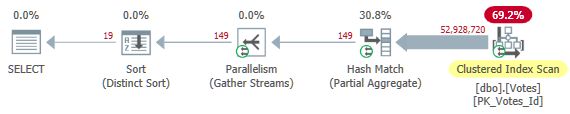

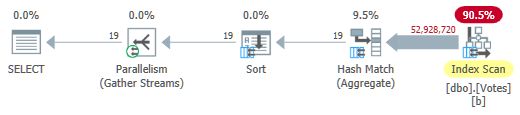

Removing the MAXDOP 1 hint gives a parallel plan:

This is the plan the optimizer chooses without any hints in my configuration. It returns results in 4,200ms using a total of 32,800ms of CPU (at DOP 8).

2. Nonclustered Index

Scanning the whole table to find just the BountyAmount seems inefficient, so it is natural to try adding a nonclustered index on just the one column this query needs:

CREATE NONCLUSTERED INDEX b

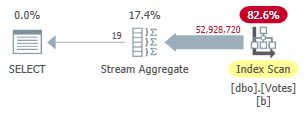

ON dbo.Votes (BountyAmount);This index takes a while to create (1m 40s). The MAXDOP 1 query now uses a Stream Aggregate because the optimizer can use the nonclustered index to present rows in BountyAmount order:

This runs for 9,300ms (consuming the same amount of CPU time). A useful improvement on the original 10,500ms but hardly earth-shattering.

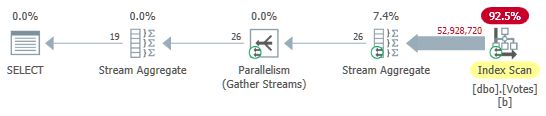

Removing the MAXDOP 1 hint gives a parallel plan with local (per-thread) aggregation:

This executes in 3,400ms using 25,800ms of CPU time. We might be able to better with row or page compression on the new index, but I want to move on to more interesting options.

3. Batch Mode on Row Store (BMOR)

Now set the database to SQL Server 2019 compatibility mode using:

ALTER DATABASE StackOverflow2013

SET COMPATIBILITY_LEVEL = 150;This gives the optimizer freedom to choose batch mode on row store if it deems it worthwhile. This can provide some of the benefits of batch mode execution without requiring a column store index. For deep technical details and undocumented options, see Dmitry Pilugin’s excellent article on the subject.

Unfortunately, the optimizer still chooses fully row mode execution using stream aggregates for both the serial and parallel test queries. In order to get a batch mode on row store execution plan, we can add a hint to encourage aggregation using hash match (which can run in batch mode):

SELECT DISTINCT

V.BountyAmount

FROM dbo.Votes AS V

ORDER BY

V.BountyAmount

OPTION (HASH GROUP, MAXDOP 1);This gives us a plan with all operators running in batch mode:

Results are returned in 2,600ms (all CPU as usual). This is already faster than the parallel row mode plan (3,400ms elapsed) while using far less CPU (2,600ms versus 25,800ms).

Removing the MAXDOP 1 hint (but keeping HASH GROUP) gives a parallel batch mode on row store plan:

This runs in just 725ms using 5,700ms of CPU.

4. Batch Mode on Column Store

The parallel batch mode on row store result is an impressive improvement. We can do even better by providing a column store representation of the data. To keep everything else the same, I will add a nonclustered column store index on just the column we need:

CREATE NONCLUSTERED COLUMNSTORE INDEX nb

ON dbo.Votes (BountyAmount);This is populated from the existing b-tree nonclustered index and takes only 15s to create.

SELECT DISTINCT

V.BountyAmount

FROM dbo.Votes AS V

ORDER BY V.BountyAmount

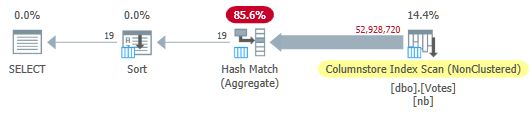

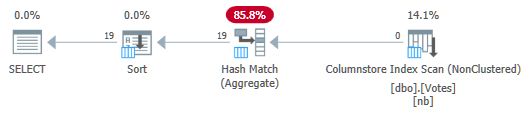

OPTION (MAXDOP 1);The optimizer chooses a fully batch mode plan including a column store index scan:

This runs in 115ms using the same amount of CPU time. The optimizer chooses this plan without any hints on my system configuration because the estimated cost of the plan is below the cost threshold for parallelism.

To get a parallel plan, we can either reduce the cost threshold or use an undocumented hint:

SELECT DISTINCT

V.BountyAmount

FROM dbo.Votes AS V

ORDER BY

V.BountyAmount

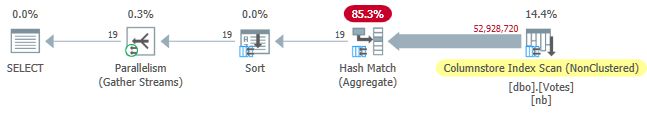

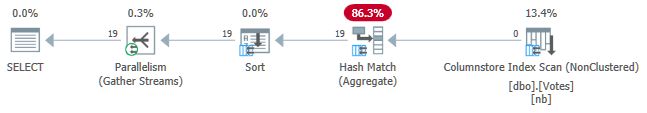

OPTION (USE HINT ('ENABLE_PARALLEL_PLAN_PREFERENCE'));In any case, the parallel plan is:

Query elapsed time is now down to 30ms, while consuming 210ms of CPU.

5. Batch Mode on Column Store with Push Down

The current best execution time of 30ms is impressive, especially when compared to the original 10,500ms. Nevertheless, it is a bit of shame that we have to pass nearly 53 million rows (in 58,868 batches) from the Scan to the Hash Match Aggregate.

It would be nice if SQL Server could push the aggregation down into the scan and just return distinct values from the column store directly. You might think we need to express the DISTINCT as a GROUP BY to get Grouped Aggregate Pushdown, but that is logically redundant and not the whole story in any case.

With the current SQL Server implementation, we actually need to compute an aggregate to activate aggregate pushdown. More than that, we need to use the aggregate result somehow, or the optimizer will just remove it as unnecessary.

One way to write the query to achieve aggregate pushdown is to add a logically redundant secondary ordering requirement:

SELECT

V.BountyAmount

FROM dbo.Votes AS V

GROUP BY

V.BountyAmount

ORDER BY

V.BountyAmount,

COUNT_BIG(*) -- New!

OPTION (MAXDOP 1);The serial plan is now:

Notice that no rows are passed from the Scan to the Aggregate! Under the covers, partial aggregates of the BountyAmount values and their associated row counts are passed to the Hash Match Aggregate, which sums the partial aggregates to form the required final (global) aggregate. This is very efficient, as confirmed by the elapsed time of 13ms (all of which is CPU time). As a reminder, the previous serial plan took 115ms.

To complete the set, we can get a parallel version in the same way as before:

This runs for 7ms using 40ms of CPU in total.

It is shame we need to compute and use an aggregate we don’t need just to get push down. Perhaps this will be improved in future so that DISTINCT and GROUP BY without aggregates can be pushed down to the scan.

6. Row Mode Recursive Common Table Expression

At the beginning, I promised to revisit the recursive CTE solution used to find a small number of duplicates in a large data set. Implementing the current requirement using that technique is quite straightforward, though the code is necessarily longer than anything we have seen up to this point:

WITH R AS

(

-- Anchor

SELECT

V.BountyAmount

FROM dbo.Votes AS V

ORDER BY

V.BountyAmount ASC

OFFSET 0 ROWS

FETCH FIRST 1 ROW ONLY

UNION ALL

-- Recursive

SELECT

Q1.BountyAmount

FROM

(

SELECT

V.BountyAmount,

rn = ROW_NUMBER() OVER (

ORDER BY V.BountyAmount ASC)

FROM R

JOIN dbo.Votes AS V

ON V.BountyAmount > ISNULL(R.BountyAmount, -1)

) AS Q1

WHERE

Q1.rn = 1

)

SELECT

R.BountyAmount

FROM R

ORDER BY

R.BountyAmount ASC

OPTION (MAXRECURSION 0);The idea has its roots in a so-called index skip scan: We find the lowest value of interest at the beginning of the ascending-ordered b-tree index, then seek to find the next value in index order, and so on. The structure of a b-tree index makes finding the next highest value very efficient—there is no need to scan through the duplicates.

The only real trick here is convincing the optimizer to allow us to use TOP in the ‘recursive’ part of the CTE to return one copy of each distinct value. Please see my previous article if you need a refresher on the details.

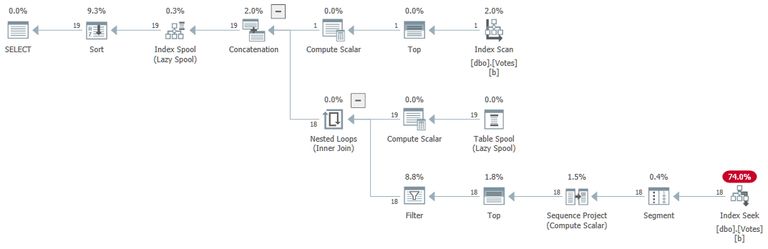

The execution plan (explained in general by Craig Freedman here) is:

The query returns correct results in 1ms using 1ms of CPU, according to Sentry One Plan Explorer.

7. Iterative T-SQL

Similar logic can be expressed using a WHILE loop. The code may be easier to read and understand than the recursive CTE. It also avoids having to use tricks to work around the many restrictions on the recursive part of a CTE. Performance is competitive at around 15ms. This code is provided for interest and is not included in the performance summary table.

SET NOCOUNT ON;

SET STATISTICS XML OFF;

DECLARE @Result table

(

BountyAmount integer NULL UNIQUE CLUSTERED

);

DECLARE @BountyAmount integer;

-- First value in index order

WITH U AS

(

SELECT

V.BountyAmount

FROM dbo.Votes AS V

ORDER BY

V.BountyAmount ASC

OFFSET 0 ROWS

FETCH NEXT 1 ROW ONLY

)

UPDATE U

SET @BountyAmount = U.BountyAmount

OUTPUT Inserted.BountyAmount

INTO @Result (BountyAmount);

-- Next higher value

WHILE @@ROWCOUNT > 0

BEGIN

WITH U AS

(

SELECT

V.BountyAmount

FROM dbo.Votes AS V

WHERE

V.BountyAmount > ISNULL(@BountyAmount, -1)

ORDER BY

V.BountyAmount ASC

OFFSET 0 ROWS

FETCH NEXT 1 ROW ONLY

)

UPDATE U

SET @BountyAmount = U.BountyAmount

OUTPUT Inserted.BountyAmount

INTO @Result (BountyAmount);

END;

-- Accumulated results

SELECT

R.BountyAmount

FROM @Result AS R

ORDER BY

R.BountyAmount;Performance Summary Table

The “Est. Cost” column shows the optimizer’s cost estimate for each query as reported on the test system.

Final Thoughts

Finding a small number of distinct values might seem like quite a specific requirement, but I have come across it fairly frequently over the years, usually as part of tuning a larger query.

The last several examples were quite close in performance. Many people would be happy with any of the sub-second results, depending on priorities. Even the serial batch mode on row store result of 2,600ms compares well with the best parallel row mode plans, which bodes well for significant speed-ups just by upgrading to SQL Server 2019 and enabling database compatibility level 150. Not everyone will be able to move to column store storage quickly, and it won’t always be the right solution anyway. Batch mode on row store provides a neat way to achieve some of the gains possible with column stores, assuming you can convince the optimizer to choose to use it.

The parallel column store aggregate push down result of 57 million rows processed in 7ms (using 40ms of CPU) is remarkable, especially considering the hardware. The serial aggregate push down result of 13ms is equally impressive. It would be great if I hadn’t had to add a meaningless aggregate result to get these plans.

For those who cannot yet make the move to SQL Server 2019 or column store storage, the recursive CTE is still viable and efficient solution when a suitable b-tree index exists, and the number of distinct values needed is guaranteed to be quite small. It would be neat if SQL Server could access a b-tree like this without needing to write a recursive CTE (or equivalent iterative looping T-SQL code using WHILE).

Another possible solution for the problem is to create an indexed view. This will provide distinct values with great efficiency. The down side, as usual, is that each change to the underlying table will need to update the row count stored in the materialized view.

Each of the solutions presented here has its place, depending on the requirements. Having a wide range of tools available is generally speaking a good thing when tuning queries. Most of the time, I would choose one of the batch mode solutions because their performance will be quite predictable no matter how many duplicates are present.

Thanks for reading.